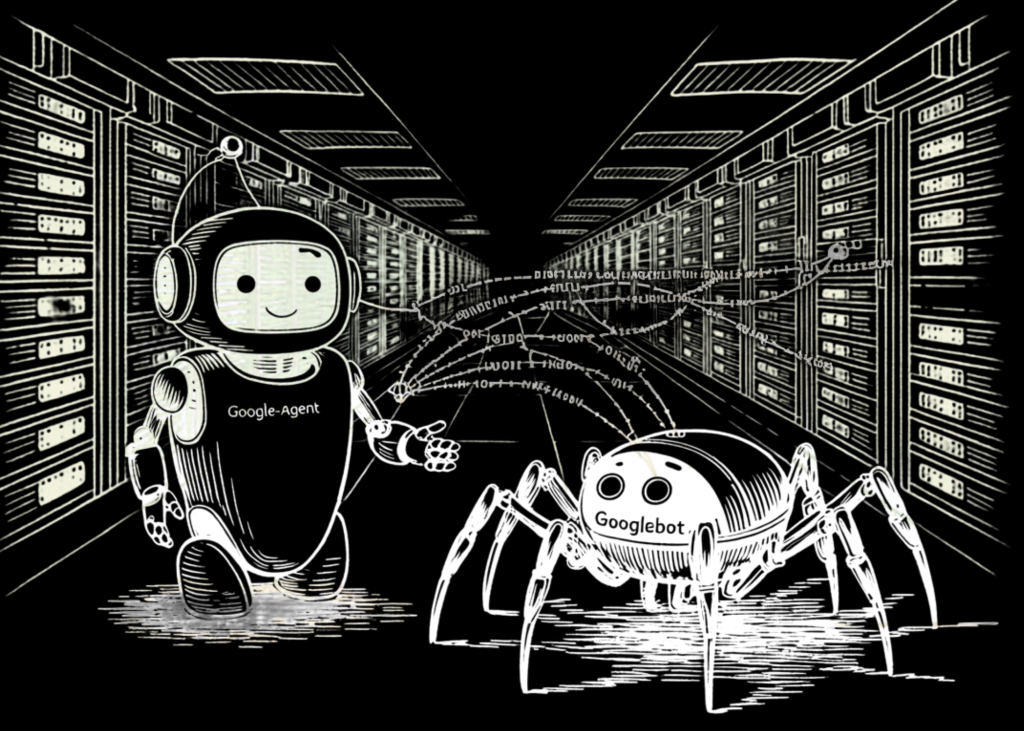

A new Google user agent, Google-Agent, is appearing in server logs. Developers need to understand it in order to tell automated indexing traffic apart from real-time, user-initiated requests.

The Big Picture

Google-Agent operates under different rules from Google's traditional crawlers. Unlike the autonomous crawlers that have defined the web for decades, it acts only when a user performs a specific action.

The core distinction is between crawlers and fetchers. Autonomous crawlers like Googlebot discover and index pages on a schedule determined by Google's algorithms. User-triggered fetchers like Google-Agent are used by Google AI products to fetch web content in response to a direct user prompt.
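To make the crawler/fetcher split concrete, here is a minimal Python sketch that sorts a User-Agent header into one of the two groups. It assumes the product tokens `Google-Agent` and `Googlebot` appear somewhere in the header; the exact strings should be checked against Google's published user-agent list. The names `AgentKind` and `classify_user_agent` are hypothetical and exist only for this illustration.

```python
from enum import Enum


class AgentKind(Enum):
    CRAWLER = "autonomous crawler"
    USER_FETCHER = "user-triggered fetcher"
    OTHER = "other"


# Assumption: these tokens appear somewhere in the User-Agent header.
# Verify the exact strings against Google's published user-agent list.
USER_FETCHER_TOKENS = ("Google-Agent",)
CRAWLER_TOKENS = ("Googlebot",)


def classify_user_agent(user_agent: str) -> AgentKind:
    """Classify a User-Agent header by case-insensitive substring match."""
    ua = user_agent.lower()
    # Fetcher tokens are checked first, so a header naming both
    # kinds of agent is treated as user-driven.
    if any(token.lower() in ua for token in USER_FETCHER_TOKENS):
        return AgentKind.USER_FETCHER
    if any(token.lower() in ua for token in CRAWLER_TOKENS):
        return AgentKind.CRAWLER
    return AgentKind.OTHER
```

Because User-Agent headers can be spoofed, a match like this is only a first-pass label, not proof that a request came from Google.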

“Google-Agent ignores robots.txt because fetches are initiated by human users requesting to interact with specific content.”

Why It Matters

For software engineers managing web infrastructure, the rise of Google-Agent shifts the focus from SEO-centric 'crawl budgets' to real-time request management. Observability becomes critical: log parsing should recognize Google-Agent traffic as legitimate, user-driven requests rather than lumping it in with crawler activity.
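As one way to approach that kind of observability, the Python sketch below pulls Google-Agent requests out of an access log and tallies them by path. It assumes the server writes the common "combined" log format and that the token `Google-Agent` appears in the User-Agent field; both should be verified against your own configuration. `LOG_PATTERN` and `count_google_agent_paths` are hypothetical names for this example.

```python
import re
from collections import Counter

# Combined log format:
# ip ident user [time] "method path protocol" status size "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<user_agent>[^"]*)"$'
)


def count_google_agent_paths(lines):
    """Count requests per path whose User-Agent mentions Google-Agent."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line.strip())
        # Lines that do not fit the assumed format are skipped silently.
        if match and "google-agent" in match["user_agent"].lower():
            counts[match["path"]] += 1
    return counts
```

Feeding it an open log file, for example `count_google_agent_paths(open("access.log"))` with a placeholder filename, returns a `Counter` keyed by request path. Tracking these counts separately from crawler traffic shows which pages users are reaching through Google's AI products.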